Despite the many efforts to define standards, such as the Barcelona Principles 1.0 and 2.0, media measurement and evaluation has remained a largely theory-free zone in practical, day-to-day application. While that is understandable (communication is complex, and attributing cause and effect remains at best approximate), it doesn’t help with the “evaluation stasis” that Professor Jim Macnamara rightly points to.

I remember a paper I wrote as a communications student in the late 1980s, on the Copernican vs. Ptolemaic world views in the communication sciences. Going back to Walter Lippmann’s Public Opinion from 1922, and his analysis of “the images in people’s heads,” it discussed the mass media’s relationship with reality. What is the media’s role, its purpose? Is it to reflect and mirror reality (the Ptolemaic view), or is it to shape and essentially construct reality (the Copernican view)?

The question remains relevant. In choosing the analogy of the great astronomers, I was struck that the Ptolemaic view is one of a black-and-white world, of clarity and certainty. The Copernican view implies shades of grey and tolerance of different perspectives, and seems more suited to our complex modern reality.

PR practitioners understand the need for proof and empirical validation through analysis, measurement and evaluation. However, under pressure to deliver results and meet targets, they will naturally default to the simple (sometimes simplistic) over the complex, to linear media effects models that favor the “hypodermic needle” or “bullet theory” over more complex, multi-causal models.

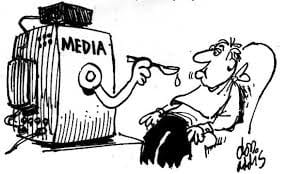

There is talk of message penetration, controlling the narrative and target audiences, and these metaphors betray a linear, organization-centric view where the public merely serves a purpose for PR. As Jim Macnamara states, this “one-way transmissional approach remains common in major corporations and government organizations.”

Communicators, as well as marketers, are comfortable in the stimulus-response world of AIDA, that time-honored, almost 100-year-old funnel model. First, you need to generate attention, which through the right communication measures will turn into interest, then desire, and finally action: a purchase, a recommendation, a vote. Updated and extended versions may include shared experience and loyalty in their ‘customer journey’ models, or bring together paid, earned, shared and owned media in one system.

Yet they still adhere to the Ptolemaic principle of strong, linear media effects, which can best be achieved through mechanical, engineered solutions. This is the ‘reliance on positivist epistemology’ that Alexander Buhmann and Fraser Likely called out in their paper on Evaluation and Measurement in Strategic Communication: “a naïve and one-sided empiricism in which models tend to be taken for granted as true representations of reality.”

In a world of dashboards, ubiquitous data and digital metrics, and overstretched metaphors such as “social media influencer,” not enough attention is directed at the human and social sides of communication, or at the trans- and interdisciplinary theoretical and research work in these areas.

Institute for Public Relations CEO Dr. Tina McCorkindale made that point in an AMEC keynote in 2017 when she declared that we “need to pull in more measures from psychology, sociology, and other ‘ologies’. … We can further home in on the specific demographics, psychographics, attitudinal and behavioral data to draw insight.” Through its Behavioral Insights Research Center (BIRC), the IPR is driving research to help understand the factors that influence attitude and behavior change to enable effective communication.

Behavior change remains the Holy Grail of PR. The maturity argument of measurement and evaluation states that if you can empirically prove longer-term outcomes of PR activities (and thus justify expenditure), you earn the respect of the C-suite. In a recent paper, IPR Evaluation and Measurement Commission members Jim Macnamara and Fraser Likely outlined that “evaluation requires social science research methods, particularly at outcomes and impact levels … program evaluation tools such as program logic models provide a ‘roadmap’ and a framework to bring consistency to evaluation.”

This is critical, and it is reflected in AMEC’s Integrated Evaluation Framework (especially the sections on outcomes and organizational impact). It is equally critical that PR practitioners, measurers, evaluators and insight-generators are grounded in the history, present and future of communication science and the wide array of disciplines that contribute to the field’s continuously expanding body of knowledge.

Evaluation and measurement are often categorized under media intelligence. Intelligence is both the collection of information and the ability to acquire and apply knowledge and skills. The burgeoning and evolving field of PR, including its measurement and evaluation arm, would do well to pay more attention to the latter and to adopt a more holistic worldview that welcomes complexity, open debate and interdisciplinary learning. We shouldn’t abandon the KISS principle, but we need to acknowledge that communication is no simple matter. I’m sure Nicolaus Copernicus would concur.

Takeaway: three steps to make media intelligence more intelligent:

- Treat PR as a social and a human science

Edward Bernays, the so-called father of PR, famously regarded PR as an applied social science. To understand communication, we also need to look at it as a human science, and consider insights from psychology and the neurosciences, for example.

- Lead with (and be led by) data and knowledge

Research to accompany and validate PR activities should be carried out at all process stages, in planning (formative research), during execution (process research), and after completion (summative analysis). Data and insights should be grounded in theory and models that can guide the use of both quantitative and qualitative approaches.

- Apply program theory and logic

Work done by AMEC and the IPR Commission on Evaluation and Measurement in recent years (Macnamara & Likely 2017) strongly advocates the use of program theory and program logic. These models provide a framework to guide measurement and evaluation before, during and after a specific activity. They also serve as performance measurement systems that connect PR evaluation to established management processes (see, for example, the UK Government Communication Service).

Thomas Stoeckle is a visiting lecturer at Quadriga University. He is an adjunct professor at the University of Florida College of Journalism and Communications and a member of the IPR Commission on Evaluation and Measurement.